Chink in the Armor: Why Even Sites That Look Good Fail to Drive Business From Google

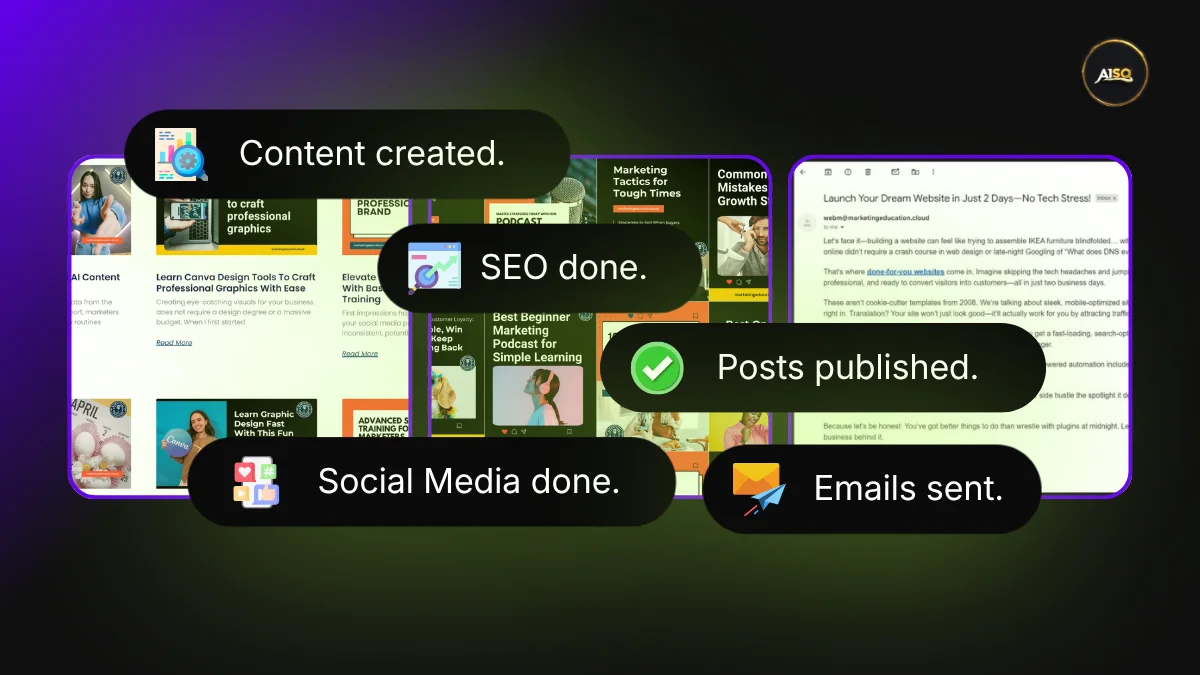

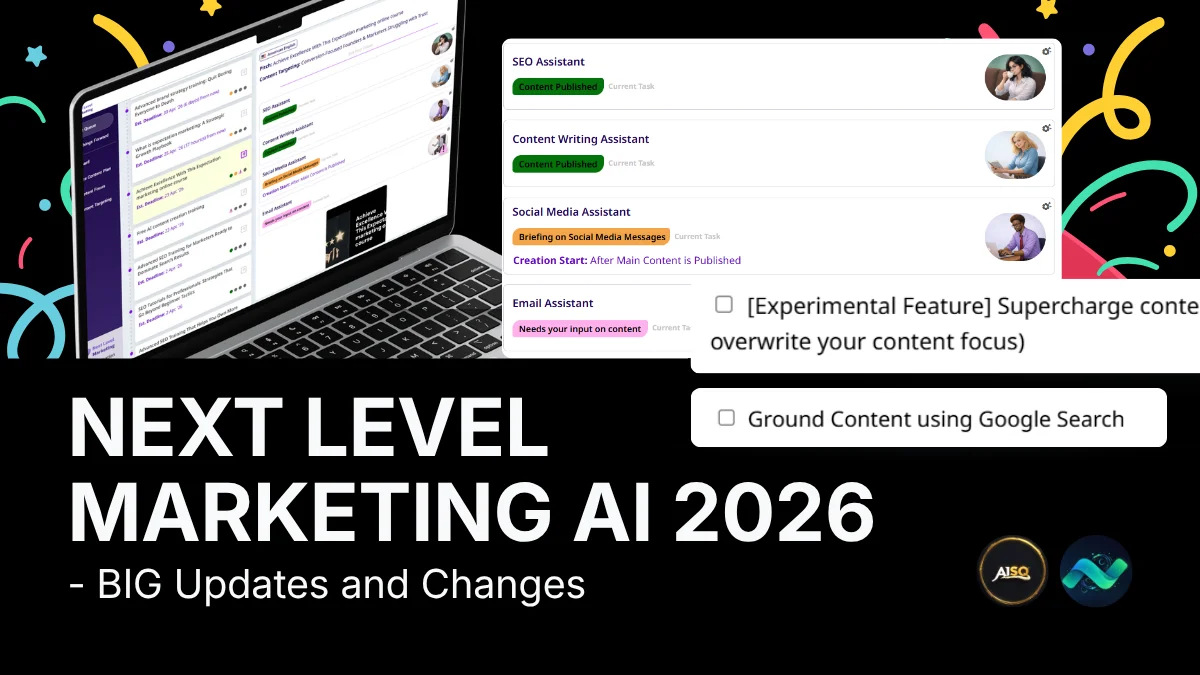

If you’re not in the top 10 on Google or Bing, you don’t exist in AI Search. A new website recently came our way for Florin Muresan’s Growth Program. The program’s 90 days are critical, because we get to fix even sites that look amazingly